For an entire industry that defines itself based on the word "quality", today there is still no agreed upon standard for what classifies HD quality video on the web.... If the industry wants to progress with HD quality video, we're going to have to agree on a standard - and fast.

He's absolutely right. Many companies attempt to pass off 480p as HD video, but most video enthusiasts would reject such an assertion--after all, if it isn't HD for an analog signal, why would it be HD for a digital signal? Likewise, lots of video is encoded at an unacceptably low bit rate, which results in obvious artifacts. Why would such poor-quality video be considered "high definition"?

Wikipedia's definition for High-definition television is a decent start:

High-definition television (or HDTV) is a digital television broadcasting system with higher resolution than traditional television systems (standard-definition TV, or SDTV). HDTV is digitally broadcast; the earliest implementations used analog broadcasting, but today digital television (DTV) signals are used, requiring less bandwidth due to digital video compression.

This is still lacking. What exactly is "higher resolution than traditional television systems"? And just which SDTV system, since there were many of them? And is resolution all there is to it? What if I encode video to 1080p but at a horrible bit rate, which causes lots of blocking artifacts? What about video with an odd aspect ratio, where the number of vertical lines doesn't pass muster? Clearly this definition is not enough on its own.

Some aspects of creating a standard are fairly straightforward: most people seem to be fairly comfortable with 720p being the "minimum" resolution at which video can be encoded. Ben Waggoner had an interesting proposal where 720p was acceptable, but it was also acceptable to generalize it to anything with "at least 16 million pixels per second," which takes into account both frame rate and resolution. He also brought up the issue of using horizontal resolution as a criterion, since not everything is 16:9.
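To make the pixels-per-second idea concrete, here is a small sketch of the arithmetic. The 16 million threshold comes from the proposal quoted above; the list of formats and the function names are my own illustrative choices, not part of the proposal.

```python
# Threshold from the quoted proposal: at least 16 million pixels per second.
MIN_PIXELS_PER_SECOND = 16_000_000


def pixels_per_second(width, height, fps):
    """Pixel throughput of a stream: frame area multiplied by frame rate."""
    return width * height * fps


def meets_pixel_rate(width, height, fps, minimum=MIN_PIXELS_PER_SECOND):
    """True if the stream reaches the minimum pixel throughput."""
    return pixels_per_second(width, height, fps) >= minimum


# Hypothetical formats chosen only for illustration.
formats = [
    ("720p24", 1280, 720, 24),
    ("720p30", 1280, 720, 30),
    ("480p30 widescreen", 854, 480, 30),
    ("VGA 30 fps", 640, 480, 30),
]

for name, w, h, fps in formats:
    rate = pixels_per_second(w, h, fps)
    print(f"{name}: {rate:,} pixels/s, passes={meets_pixel_rate(w, h, fps)}")
```

Under this rule 720p at 24 FPS clears the bar at roughly 22 million pixels per second, while VGA at 30 FPS falls short at roughly 9 million.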

But on the question of "quality," most simply punted, and I find this odd. Ben Waggoner mentions:

Hassan Wharton-Ali brought up another good point on the thread - HD should actually be HD quality. It can’t be a lousy, over-quantized encode using a suboptimally high resolution just so it can be called HD.

A good test is the video should look worse (due to less detail), not better (due to less artifacts), if encoded at a lower resolution at the same data rate. If reducing your frame size makes the video look better when scaled to the same size, then the frame size is too high!

It is a good point, and I don't completely disagree with Ben's proposal--it should look worse due to less detail if encoded at a lower resolution. But this is the crux of the issue: what does it mean to look worse? Is this just a subjective judgment call on behalf of the person encoding the video? I don't think this addresses the problem of having a minimum acceptable "quality" for HD video.

Dan Rayburn's suggestion is even less desirable, in my opinion:

To me, the term HD should refer to and be defined by the resolution and a minimum bitrate requirement. Since you could have a 1080p HD video encoded at a very low bitrate, which could result in a poor viewing experience inferior to that of a higher-bitrate video in SD resolution, the resolution and bitrate is the only way to define HD.

The first issue with this is that the "minimum bit rate" requirement would have to somehow scale with the resolution and frame rate. It would also have to account for the codec being used. This would result in an impossibly complicated system, endless arguments, etc. (For example, would we impose the same bit rate requirement on H.264 as we would on VC-1? What about "future" codecs?)
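A quick back-of-the-envelope sketch shows why a single fixed bit rate cannot serve every format. The 2 Mbit/s figure, the two formats, and the `bits_per_pixel` helper are illustrative assumptions, and the calculation ignores codec efficiency entirely.

```python
def bits_per_pixel(bitrate_bps, width, height, fps):
    """Average number of encoded bits available per pixel per frame."""
    return bitrate_bps / (width * height * fps)


# Hypothetical fixed bit rate applied to two different formats.
BITRATE_BPS = 2_000_000

for name, w, h, fps in [("720p30", 1280, 720, 30), ("1080p30", 1920, 1080, 30)]:
    bpp = bits_per_pixel(BITRATE_BPS, w, h, fps)
    print(f"{name}: {bpp:.3f} bits per pixel")
```

The same bit rate leaves 1080p30 with less than half the bits per pixel that 720p30 gets, so any minimum would need a separate entry for each resolution and frame rate, and then again for each codec.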

A bigger issue is that not all video content is the same. The resulting "quality" of a video encoded at a given bit rate depends heavily on the video being encoded. A video with very little movement can often be encoded with a low bit rate and look fantastic, so the bit rate requirement would essentially amount to wasted bandwidth. Conversely, a video with a lot of motion and scene changes may require a lot more bits to get an acceptable, block-free viewing experience--and it's not clear what that acceptable threshold would be.

I find both of these suggestions insufficient. I propose an alternative: objective video quality algorithms. The idea is straightforward: by comparing the source material with the output material, we can objectively establish a score that at least has some meaningful relationship with Mean Opinion Scores (MOS). In a nutshell, a MOS is how "good" the average person thinks some piece of video appears.

Peak Signal-to-Noise Ratio (PSNR), which is derived from Mean Squared Error (MSE), is the most common metric, albeit a crude one that most engineers and scientists consider deficient. But it's 2009, baby--we can do better. We have better.
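For reference, here is a minimal sketch of how PSNR is computed from MSE. It assumes 8-bit samples (peak value 255) and frames flattened into plain lists of numbers; the function names are illustrative and not taken from any particular library.

```python
import math


def mse(reference, distorted):
    """Mean squared error between two equally sized sequences of samples."""
    if len(reference) != len(distorted) or not reference:
        raise ValueError("frames must be non-empty and the same size")
    total = sum((r - d) ** 2 for r, d in zip(reference, distorted))
    return total / len(reference)


def psnr(reference, distorted, peak=255.0):
    """PSNR in decibels; identical frames give infinity."""
    error = mse(reference, distorted)
    if error == 0:
        return math.inf
    return 10.0 * math.log10(peak ** 2 / error)
```

Because the score depends only on the summed per-pixel error, two very different-looking distortions with the same MSE receive exactly the same PSNR, which is a large part of why it is considered crude.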

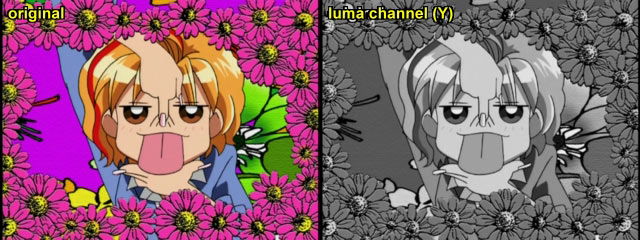

My suggestion would be the Structural SIMilarity (SSIM) Index, which is relatively inexpensive to compute (its closely related brother, MSSIM, is much more pricey) and definitely correlates better with MOS.
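To give a feel for what SSIM measures, here is a deliberately simplified sketch that evaluates the SSIM formula once over a whole frame. Practical implementations compute it over small local windows and average the results, so treat this as an illustration of the formula only. It assumes 8-bit luma samples in flat lists, and the name `global_ssim` is my own.

```python
def global_ssim(reference, distorted, peak=255.0):
    """SSIM formula applied once to two whole frames (no local windows)."""
    n = len(reference)
    if n == 0 or n != len(distorted):
        raise ValueError("frames must be non-empty and the same size")

    # Stabilizing constants from the original SSIM formulation.
    c1 = (0.01 * peak) ** 2
    c2 = (0.03 * peak) ** 2

    mu_x = sum(reference) / n
    mu_y = sum(distorted) / n

    # Population variance and covariance of the two frames.
    var_x = sum((x - mu_x) * (x - mu_x) for x in reference) / n
    var_y = sum((y - mu_y) * (y - mu_y) for y in distorted) / n
    cov_xy = sum((x - mu_x) * (y - mu_y)
                 for x, y in zip(reference, distorted)) / n

    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x * mu_x + mu_y * mu_y + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

Identical frames score 1.0, and the score drops as the mean brightness, contrast, or structure of the output drifts away from the reference.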

How would this work?

- During the encode process, an SSIM score is computed for each frame using the input as a reference image.

- This process is repeated for every input frame, and every output frame.

- The lowest observed SSIM score is the resulting quality score for that piece of encoded video. (I suppose another alternative is to use the average. Yet another option is to use the variance. I'd avoid the median, since it's robust against outliers, and outliers matter.)

- If the lowest observed SSIM score is less than some threshold, then the video cannot be considered High Definition.
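The steps above can be sketched in a few lines, assuming the per-frame SSIM scores have already been computed by the encoder or a separate tool. The function name, the dictionary keys, and the sample scores are made up for illustration, and the 0.9 threshold is only a placeholder value.

```python
from statistics import mean, pvariance


def summarize_quality(frame_scores, threshold):
    """Reduce per-frame SSIM scores to one verdict using the minimum score."""
    scores = list(frame_scores)
    if not scores:
        raise ValueError("at least one frame score is required")
    worst = min(scores)
    return {
        "min": worst,                         # the proposed quality score
        "mean": mean(scores),                 # alternative summary
        "variance": pvariance(scores),        # alternative summary
        "worst_frame": scores.index(worst),   # where the encode struggled
        "passes": worst >= threshold,         # fails if min is below threshold
    }


# Hypothetical per-frame scores, not measurements from any real encode.
scores = [0.97, 0.95, 0.88, 0.96]
report = summarize_quality(scores, threshold=0.9)
print(report["passes"], report["worst_frame"])
```

Using the minimum means a single badly encoded frame is enough to fail the clip, which is the point: the mean would hide exactly the outliers that viewers notice.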

The graph above shows SSIM over time at multiple bit rates. The x-axis is frame number, and the y-axis is SSIM score. My input was a VGA, 30 FPS, ~30 second raw-RGB video clip. Each line corresponds to a bit rate requested of the encoder (x264's H.264 implementation, using a baseline profile--you can see this by the low SSIM scores at the beginning of the video due to single-pass encoding). Notice the clear relationship between SSIM scores and bit rate. Also note how much variance there is in video quality: clearly certain portions of this clip are "more difficult" to encode, and this results in a degradation of video quality. Also, notice a clear law of diminishing returns: as more and more bits are thrown at the video clip, the SSIM scores converge on 1.0--the SSIM scores at 2 Mbit/sec aren't substantially different from the SSIM scores at 500 kbit/sec.

There are a few gotchas with this plan: what if we're changing the frame rate (e.g. 3:2 pulldown) and there is no clear reference frame to which we compare the output? How do we determine the SSIM threshold? Do we really want to use SSIM, or is some other algorithm better?

The first question is answered relatively easily: we compare only what was input to the encoder and the resulting output. Presumably the process of manipulating the frame rate is separate from the process of encoding. What we're talking about is how good a job our encoder does of matching the input.

The second question is easier, but it requires someone to conduct subjective video quality assessment tests to determine which SSIM threshold corresponds with a baseline level of perceived quality. In effect, someone has to do some statistical analysis on data captured during viewing sessions of actual people watching actual footage encoded with an actual compression algorithm, and determine a threshold that correlates well with people's perception of "High Definition." But at least with SSIM, this is a manageable process: once a threshold is determined, it's really independent of a whole slew of factors, like codec, the video being encoded, etc.

Let's say we decide that any SSIM score below 0.9 disqualifies the video from being called "high definition"--for the above video, this would mean 500 kbps would be just slightly too poor to call HD (notice the poor quality at the beginning of the clip). And 1000 kbps would be more than acceptable.

Lastly, even though PSNR is an outdated method, I see no reason a high-definition standard could not include metrics for both objective quality tests. There are other objective video quality algorithms, and certainly more will be developed in the future, so any standard should be open to extension at a later date.

I don't really care what objective metric is used, and certainly there is plenty of debate over which objective method correlates best with MOS, and what threshold should be used--but let's at least be scientific about this. If there's going to be a "standard" for High Quality video, then let's choose a standard that will carry us forward and not create a quagmire.